Mixing with Virtual Instruments: The Basics

DAW software, such as Ableton Live, Logic, Pro Tools, and Studio One, isn’t just about audio. Virtual instruments driven by MIDI data produce sounds in real time, in sync with the rest of your tracks. It’s as if you had a keyboard player in your studio who played along with your tracks and could play the same part over and over again without ever making a mistake or getting tired.

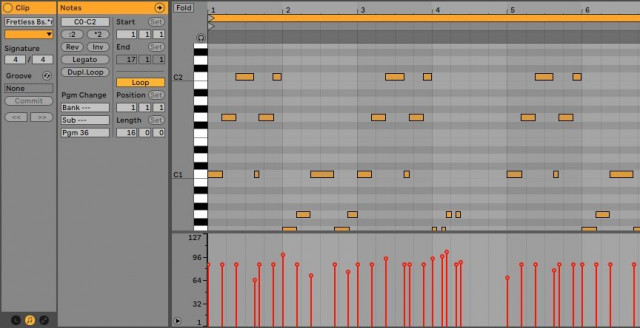

MIDI-compatible controllers, such as keyboards, drum pads, mixers, and control surfaces, generate data that represents performance gestures (fig. 1): playing notes, moving controls, changing level, adding vibrato, and so on. The computer then uses this data to control virtual instruments and effects.

Virtual Instrument Basics

Virtual instrument “tracks” are not traditional digital audio tracks, but instrument plug-ins triggered by MIDI data. The instruments exist in software. You can play a virtual instrument in real time, record what you play as data, edit it if desired, and then either convert the virtual instrument’s sound to a standard audio track or let it continue to play back in real time.

Virtual instruments are based on computer algorithms that model or reproduce particular sounds, from vintage analog synthesizers to sounds that never existed before. The instrument outputs appear in your DAW’s mixer, as if they were audio tracks.

Why MIDI Tracks Are More Editable than Audio Tracks

Because MIDI data drives a virtual instrument, editing that data changes the part. This editing can be as simple as transposing to a different key, or as complex as changing an arrangement by cutting, pasting, and processing MIDI data in various ways (fig. 2).

Because MIDI data can be modified so extensively after being recorded, tracks triggered by MIDI data are far more flexible than audio tracks. For example, if you record a standard electric bass part and decide you should have played the part with a synthesizer bass instead, or used the neck pickup instead of the bridge pickup, you can’t make those changes without re-recording the part. But the same MIDI data that drives a virtual bass can just as easily drive a synthesizer, and the virtual bass instrument itself will likely offer the sounds of different pickups.
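To see why this kind of editing is so easy, it helps to picture a MIDI part as a list of note events rather than a waveform. The following is only a minimal Python sketch of that idea, not how any particular DAW stores its data. The `Note` class, its field names, and the `transpose` helper are all hypothetical, made up for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Note:
    """Hypothetical stand-in for one recorded MIDI note."""
    start_beats: float    # where the note begins, in beats
    length_beats: float   # how long the note is held, in beats
    pitch: int            # MIDI note number, 0-127 (60 is middle C)
    velocity: int         # how hard the note was played, 1-127


def transpose(notes, semitones):
    """Return new notes with shifted pitch, clamped to the 0-127 MIDI range.

    Timing and velocity are left untouched, so the feel of the part survives.
    """
    return [
        replace(n, pitch=max(0, min(127, n.pitch + semitones)))
        for n in notes
    ]


# A short bass figure: E, G, A in the low register.
bass_line = [
    Note(0.0, 1.0, 40, 100),
    Note(1.0, 0.5, 43, 90),
    Note(1.5, 0.5, 45, 95),
]

# Move the whole part up a whole step (two semitones).
up_a_whole_step = transpose(bass_line, 2)
print([n.pitch for n in up_a_whole_step])
```

Nothing in the list says which instrument plays it. The same events could be sent to a virtual electric bass or to a synthesizer, which is exactly the flexibility an audio recording lacks.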

How DAWs Handle Virtual Instruments

Programs handle virtual instrument plug-ins in two main ways:

- The instrument inserts in one track, and a separate MIDI track sends its data to the instrument track.

- More commonly, a single track incorporates both the instrument and its MIDI data. The track itself consists of MIDI data. The track output sends audio from the virtual instrument into a mixer channel.

Compared to audio tracks, there are three major differences when mixing with virtual instruments:

- The virtual instrument’s audio is typically not recorded as a track, at least initially. Instead, it’s generated by the computer, in real time.

- The MIDI data in the track tells the instrument what notes to play, the dynamics, additional articulations, and any other aspects of a musical performance.

- In a mixer, a virtual instrument track acts like a regular audio track, because it’s generating audio. You can insert effects in a virtual instrument’s channel, use sends, do panning, automate levels, and so on.

However, after doing all needed editing, it’s a good idea to render (transform) the MIDI part into a standard audio track. This lightens the load on your CPU (virtual instruments often consume a lot of CPU power), and “future-proofs” the part by preserving it as audio. Rendering is also helpful in case the instrument you used to create the part becomes incompatible with newer operating systems or program versions. (With most programs, you can retain the original, non-rendered version if you need to edit it later.)

The Most Important MIDI Data for Virtual Instruments

The two most important parts of the MIDI “language” for mixing with virtual instruments are note data and controller data.

- Note data specifies a note’s pitch and dynamics.

- Controller data creates modulation signals that vary parameter values. These variations can be periodic, like vibrato that modulates pitch, or arbitrary variations generated by moving a control, like a physical knob or footpedal.

Just as you can vary a channel’s fader to change the channel level, MIDI data can create changes—automated or human-controlled—in signal processors and virtual instruments. These changes add interest to a mix by introducing variations.
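For readers curious about what note and controller data look like underneath, here is a small Python sketch that builds the raw bytes of two MIDI 1.0 channel messages. The status bytes (0x90 for note-on, 0xB0 for control change) and controller number 1 for the mod wheel come from the MIDI 1.0 specification. The helper function names are invented for this example, and a DAW normally handles all of this for you.

```python
MOD_WHEEL = 1  # MIDI 1.0 controller number for the modulation wheel


def _check(name, value, low, high):
    """Reject values that do not fit in the message's bit field."""
    if not low <= value <= high:
        raise ValueError(f"{name} must be {low}-{high}, got {value}")


def note_on(channel, pitch, velocity):
    """Note data: which note to play (pitch) and how hard (velocity)."""
    _check("channel", channel, 0, 15)
    _check("pitch", pitch, 0, 127)
    _check("velocity", velocity, 0, 127)
    return bytes([0x90 | channel, pitch, velocity])


def control_change(channel, controller, value):
    """Controller data: a new value for one controller, such as the mod wheel."""
    _check("channel", channel, 0, 15)
    _check("controller", controller, 0, 127)
    _check("value", value, 0, 127)
    return bytes([0xB0 | channel, controller, value])


# Middle C played fairly hard on the first channel.
print(note_on(0, 60, 100).hex(" "))

# Mod wheel pushed up in four stages, like slowly adding vibrato.
sweep = [control_change(0, MOD_WHEEL, v) for v in range(0, 128, 32)]
print([msg.hex(" ") for msg in sweep])
```

Each message is only three bytes, which is why a stream of controller changes can automate a parameter as freely as moving a fader.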

Instruments with Multiple Outputs

Many virtual instruments offer multiple outputs, especially if they’re multitimbral (i.e., they can play back different instruments, which receive their data over different MIDI channels). For example, if you’ve loaded bass, piano, and ukulele sounds, each one can have its own output, on its own mixer channel (which will likely be stereo).
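Conceptually, a multitimbral instrument sorts incoming events by MIDI channel and hands each one to the matching sound. The Python sketch below illustrates that routing under simplified assumptions. The `parts` table, the event tuples, and the `route_by_channel` function are hypothetical, and a real instrument would render audio rather than collect lists.

```python
from collections import defaultdict

# Hypothetical setup: MIDI channel (1-16) mapped to the sound loaded on it.
parts = {1: "bass", 2: "piano", 3: "ukulele"}

# Simplified incoming events as (channel, pitch, velocity).
events = [
    (1, 40, 100),
    (2, 60, 80),
    (2, 64, 80),
    (3, 72, 70),
    (1, 43, 95),
    (9, 36, 110),  # no sound is loaded on channel 9
]


def route_by_channel(events, parts):
    """Group events by the sound assigned to their MIDI channel.

    Events on channels with no assigned sound are ignored.
    """
    routed = defaultdict(list)
    for channel, pitch, velocity in events:
        if channel in parts:
            routed[parts[channel]].append((pitch, velocity))
    return dict(routed)


routed = route_by_channel(events, parts)
for sound, notes in routed.items():
    print(sound, notes)
```

With separate outputs, each of these groups would feed its own mixer channel in the DAW.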

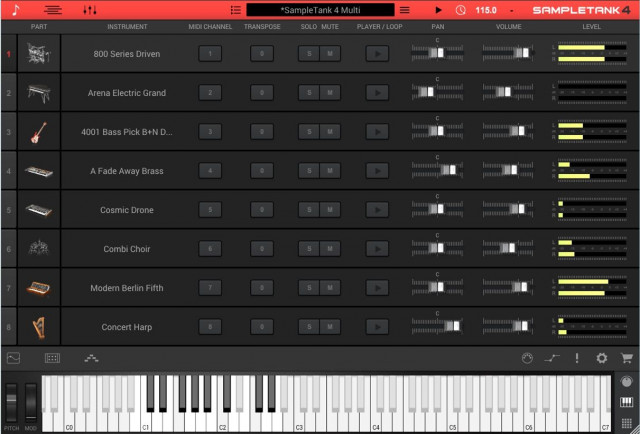

However, multitimbral instruments generally have internal mixers as well, where you can set the various instruments’ levels and panning (fig. 3). The mix of the internal sounds appears as a stereo channel in your DAW’s mixer. The instrument will likely incorporate effects, too.

Using a stereo, mixed instrument output has pros and cons.

- Pro: There’s less clutter in your software mixer, because each instrument sound doesn’t need its own mixer channel.

- Pro: If you load the instrument preset into a different DAW, the mix settings travel with it.

- Con: To adjust levels, the instrument’s user interface has to be open, which takes up screen space.

- Con: If the instrument doesn’t include the effects needed to create a particular sound, you’ll have to use the instrument’s individual outputs instead, and insert effects in your DAW’s mixer channels. (For example, using separate outputs for drum instruments allows adding individual effects to each drum sound.)

Are Virtual Instruments as Good as Physical Instruments?

This is a question that keeps cropping up, and the answer is…it depends. A virtual piano won’t have the resonating wood of a physical piano, but paradoxically, it might sound better in a mix because the instrument it’s based on was recorded with tremendous care, using the best possible microphones. Also, some virtual instruments would be difficult, or even impossible, to create as physical instruments.

One possible complaint about virtual instruments is that their controls don’t work as smoothly as those on, for example, analog synthesizers. This is because the control’s position has to be converted into digital data, which is divided into steps. Standard MIDI 1.0 controller messages offer only 128 of them. However, the MIDI 2.0 specification increases controller resolution dramatically, to 32 bits, so the steps are so minuscule that rotating a control feels just like rotating the control on an analog synthesizer.
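A little arithmetic shows how large the difference is. The Python sketch below compares the smallest change a single controller step can make with a 7-bit value (standard MIDI 1.0 controller messages) and with a 32-bit value (MIDI 2.0 controller messages). The filter cutoff range and the linear mapping are assumptions chosen only for illustration. Real instruments often map controls on a curve and smooth the incoming values.

```python
# Number of distinct values one controller message can carry.
MIDI_1_CC_STEPS = 2 ** 7    # 7-bit controller value: 128 steps
MIDI_2_CC_STEPS = 2 ** 32   # 32-bit controller value

# Hypothetical parameter: a filter cutoff mapped linearly from 20 Hz to 20 kHz.
CUTOFF_MIN_HZ = 20.0
CUTOFF_MAX_HZ = 20_000.0


def step_size_hz(steps):
    """Smallest cutoff change that one controller increment can produce."""
    return (CUTOFF_MAX_HZ - CUTOFF_MIN_HZ) / (steps - 1)


print(f"7-bit step:  {step_size_hz(MIDI_1_CC_STEPS):.1f} Hz")
print(f"32-bit step: {step_size_hz(MIDI_2_CC_STEPS):.2e} Hz")
```

Under these assumptions, each 7-bit step moves the cutoff by roughly 157 Hz, which can be heard as "zipper" stepping during a slow sweep, while a 32-bit step is a tiny fraction of a hertz.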

MIDI 2.0 also makes it easier to integrate physical instruments with DAWs, so they can be treated more like virtual instruments, and offer some of the same advantages. The bottom line is that the distinction between physical and virtual instruments continues to blur, and both are essential elements in today’s recordings.